The Leadership Control Trap

Why your instinct to manage AI is the very thing preventing you from benefiting from it.

Mark Cameron

Most organisations are now experimenting with AI. The technology is accessible, the use cases are well documented, and the pressure from boards and markets is real. Yet the results, for the vast majority, are underwhelming.

Research from BCG found that only 4% of organisations have created substantial value from AI. McKinsey’s global survey tells a similar story: 78% are using AI in at least one function, but fewer than 1% describe their rollouts as mature. The gap between these numbers is enormous, and it is not explained by technology. The tools are largely the same. The models are available to everyone. The difference is organisational.

The question that should be keeping leaders awake is not “how do we adopt AI faster?” It is “why is our organisation unable to absorb the capability that is already available to us?”

The answer, in almost every case I encounter in my advisory work, is the same. It is not the technology. It is how leaders respond to the uncertainty that the technology creates.

The instinct that makes things worse

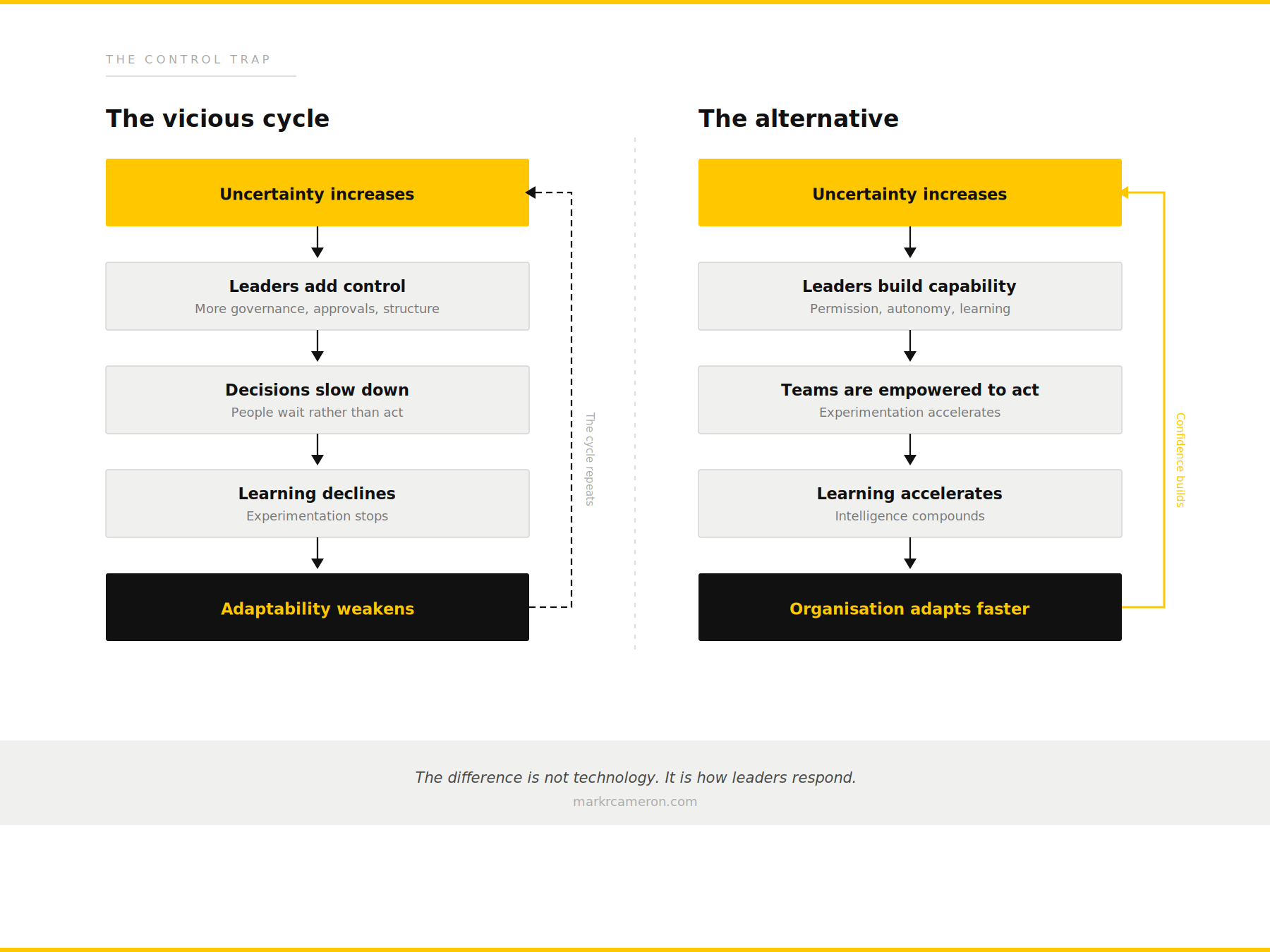

When the environment becomes less predictable, leaders instinctively try to regain control. This is not a character flaw. It is a deeply rational response shaped by decades of operating in stable, process-driven organisations where control was synonymous with good management.

The response looks like responsible leadership. More governance frameworks. More approval layers. More steering committees. More risk assessment before action. More senior sign-off before experimentation. Each of these feels individually reasonable. Taken together, they create a system that is structurally incapable of learning at the speed the environment demands.

This is what I call the Control Trap, and it operates as a self-reinforcing cycle.

The cycle is invisible to those inside it because every individual decision feels correct. The governance framework is sensible. The approval process is thorough. The risk assessment is responsible. But the cumulative effect is an organisation that has optimised itself for certainty in a world that no longer provides it.

The organisations I see struggling with AI are rarely struggling with the technology. They are struggling with the fact that their entire operating logic was designed for a different kind of environment, and the leaders running them are responding to disruption with the only playbook they have ever known.

The end of optimisation as strategy

For decades, competitive advantage came from doing known things more efficiently. Scale, process discipline, execution rigour. These were the hallmarks of well-run organisations, and the management frameworks that supported them were extraordinarily effective. Lean manufacturing, Six Sigma, business process re-engineering, continuous improvement. They all share an underlying assumption: the work is knowable, repeatable, and can be decomposed into steps that can be measured and refined.

AI breaks this assumption.

It does not just make existing processes faster. It expands what is possible. It creates new categories of value. It shifts the competitive terrain from “who can execute best” to “who can learn fastest.” And crucially, it renders the optimisation playbook insufficient, because you cannot optimise your way to intelligence. You cannot Six Sigma your way into a learning organisation.

Yet most organisations are still applying industrial-era management logic to an intelligence-era challenge. They treat AI as a way to automate existing work rather than as a reason to redesign how the organisation operates.

The distinction between these two lenses matters because it determines where investment goes, how success is measured, and what leaders pay attention to. An automation lens leads to pilot projects, efficiency gains, and incremental improvement. A redesign lens leads to structural change in how decisions are made, how knowledge flows, how teams operate, and how the organisation learns.

Return on Learning

If optimisation is no longer the primary source of advantage, what replaces it?

The answer, I believe, is learning velocity: how quickly an organisation can detect change, interpret what it means, act on it, and evolve its operations based on what it discovers.

I call this Return on Learning, and it is a deliberate subversion of the ROI language that dominates executive thinking. Most AI business cases are structured around cost reduction and efficiency improvement. They measure the return on a technology investment. Return on Learning measures something more fundamental: the rate at which the organisation builds new capability.

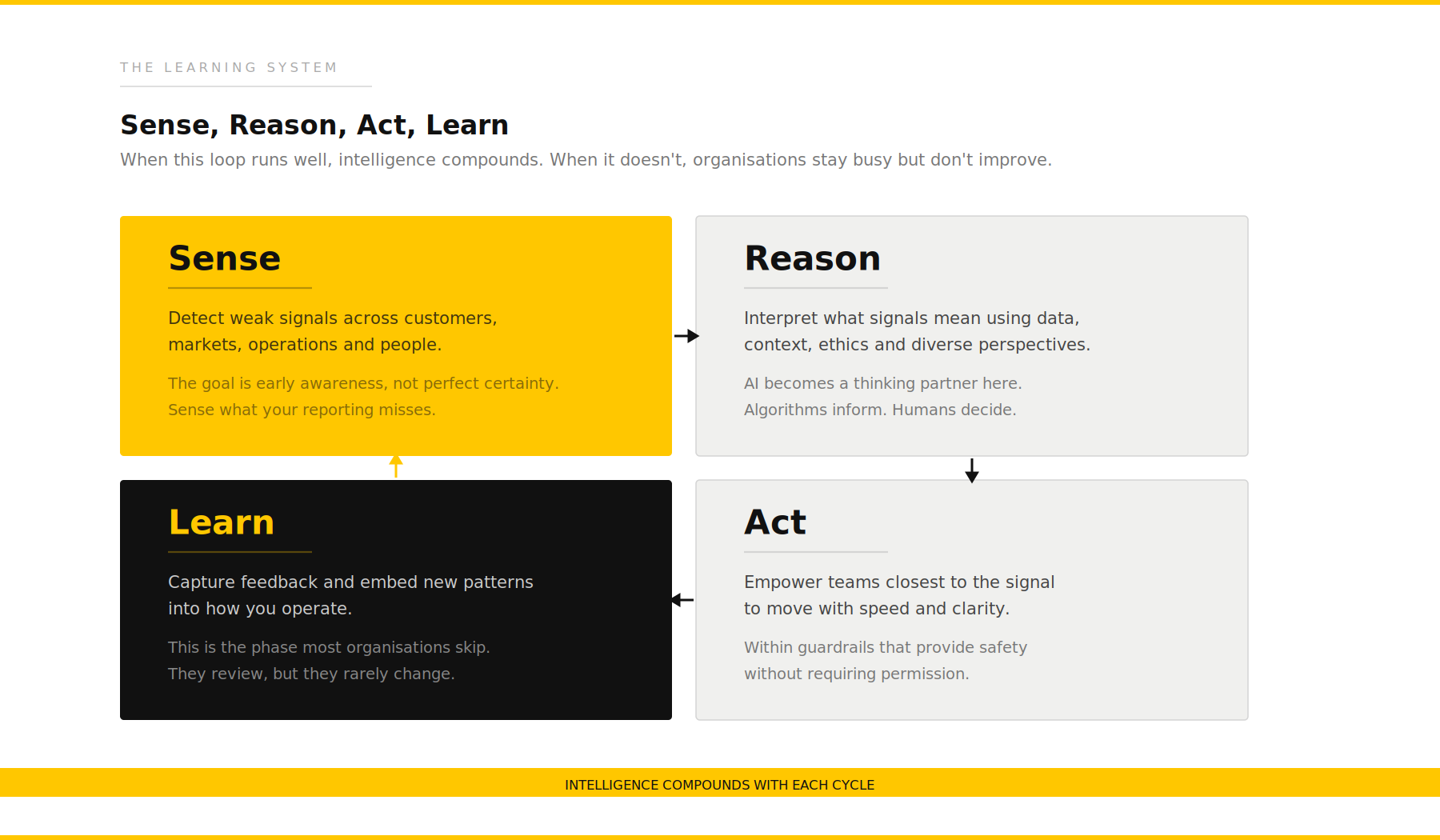

Learning velocity has four components, and they operate as a continuous cycle.

Sense: Detect weak signals across customers, markets, operations and people. The goal is early awareness, not perfect certainty. Most organisations only sense what their reporting infrastructure tells them. High-performing organisations sense what their reporting misses.

Reason: Interpret what those signals mean. This is where data, context, experience and judgement converge. It is also where AI becomes genuinely powerful, not as a decision-maker, but as a thinking partner.

Act: Empower teams closest to the signal to move with speed and clarity, within guardrails that provide safety without requiring permission. The Control Trap breaks this phase first.

Learn: Capture feedback and embed new patterns into how the organisation operates. Not just reviewing what happened, but changing processes, policies, models and mental models based on what was discovered.

When this cycle runs effectively, intelligence compounds over time. The organisations in that top 4% have not simply adopted more AI tools. They have built operating models that accelerate this cycle.

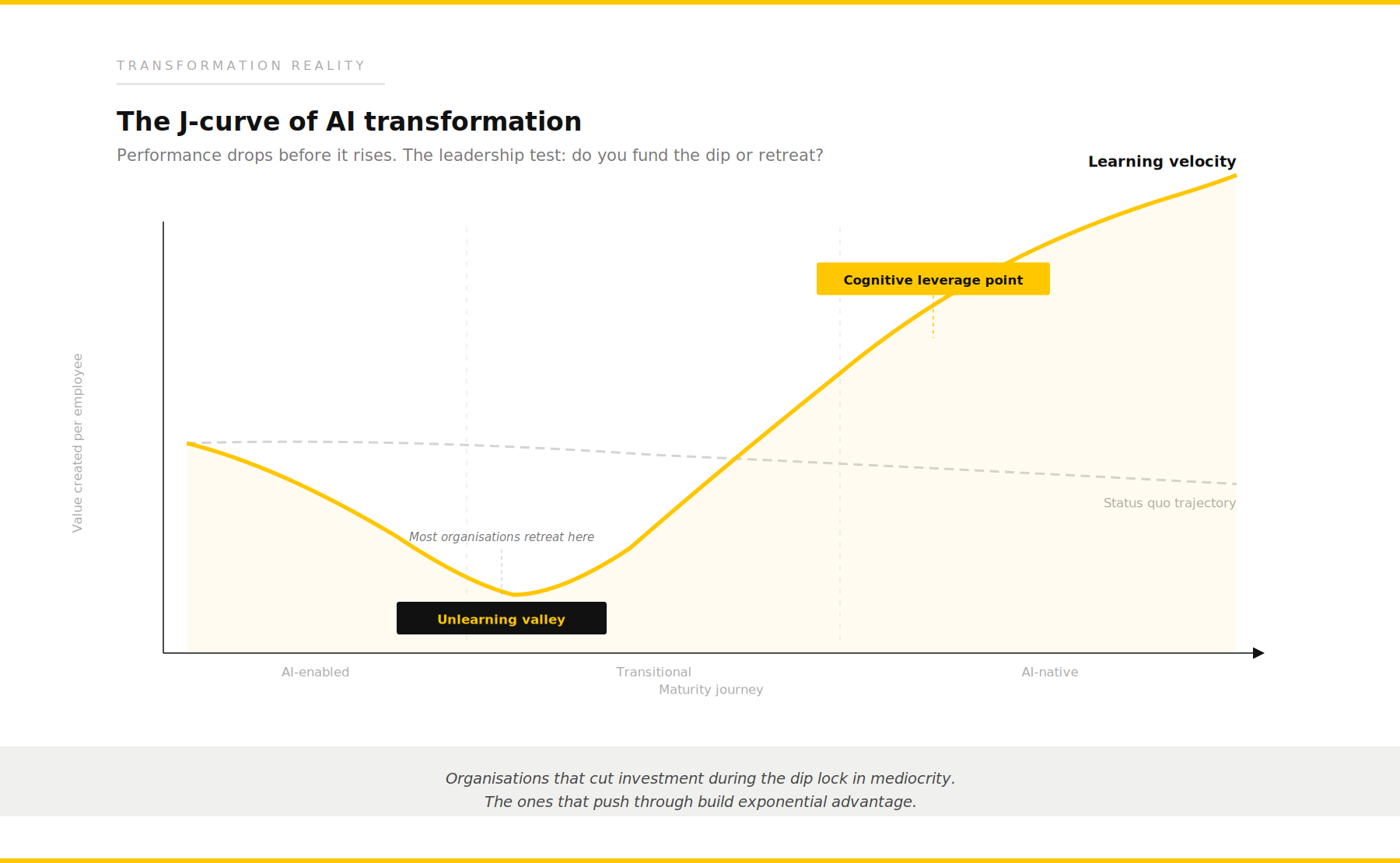

The J-curve and the leadership test it creates

There is an uncomfortable truth about this transition that most consulting frameworks gloss over: it gets worse before it gets better.

When organisations begin to shift from process-centred to intelligence-centred operations, they enter what I call the Unlearning Valley. Productivity dips. Teams are learning new tools while still delivering on existing commitments. This creates a J-curve. Performance drops before it rises.

The leadership test is straightforward: do you fund the dip or do you retreat?

Most organisations retreat. They see declining metrics during the transition, interpret it as evidence that the investment is not working, and pull back to what they know. The McKinsey and BCG figures cited earlier, with wide adoption but very little maturity, are consistent with this being a common failure mode.

The organisations that break through are the ones whose leaders understand the J-curve, communicate it to their boards, and deliberately fund the transition period. They accept short-term disruption as the cost of long-term capability. They measure different things during the valley: learning rate, experimentation yield, decision latency.

This is genuinely difficult. Quarterly reporting cycles punish leaders who invest in capability over efficiency. Boards want measurable returns on visible timelines. And yet the data is clear: organisations that cut investment during the dip lock in mediocrity. The ones that push through build exponential advantage once the cycle stabilises, because learning velocity compounds in a way that efficiency never can.
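To make the arithmetic behind that claim concrete, here is a deliberately simple sketch comparing two paths: one that adds a fixed efficiency gain each quarter, and one that dips during the transition and then compounds. Every number in it (the starting index of 100, the gain of 2 points per quarter, the three-quarter dip of 5 points, the 8% quarterly growth) is an illustrative assumption, not data from any organisation or from the research cited above, and the function names are hypothetical.

```python
def linear_path(start, gain_per_quarter, quarters):
    """Efficiency-only path: the same absolute gain every quarter."""
    return [start + gain_per_quarter * q for q in range(quarters + 1)]


def j_curve_path(start, dip_per_quarter, dip_quarters, growth_rate, quarters):
    """Transition path: performance falls during the valley, then compounds."""
    values = [start]
    for q in range(1, quarters + 1):
        if q <= dip_quarters:
            # The valley: performance drops while the organisation unlearns.
            values.append(values[-1] - dip_per_quarter)
        else:
            # After the valley: each quarter builds on the previous one.
            values.append(values[-1] * (1 + growth_rate))
    return values


def crossover_quarter(baseline, challenger):
    """First quarter in which the challenger path exceeds the baseline, or None."""
    for q, (b, c) in enumerate(zip(baseline, challenger)):
        if q > 0 and c > b:
            return q
    return None


# All parameters below are made-up illustrative values.
steady = linear_path(start=100.0, gain_per_quarter=2.0, quarters=16)
transition = j_curve_path(start=100.0, dip_per_quarter=5.0, dip_quarters=3,
                          growth_rate=0.08, quarters=16)

print("Lowest point of the valley:", min(transition))
print("Quarter in which the transition path overtakes:",
      crossover_quarter(steady, transition))
print("After 16 quarters:", round(steady[-1], 1), "vs", round(transition[-1], 1))
```

Under these assumed numbers the transition path looks worse for six consecutive quarters before it overtakes the steady path. A leader who judges the investment at any point in that window sees only the loss. The sketch says nothing about what the real rates are; it only shows why a linear gain and a compounding one cannot be compared over a short horizon.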

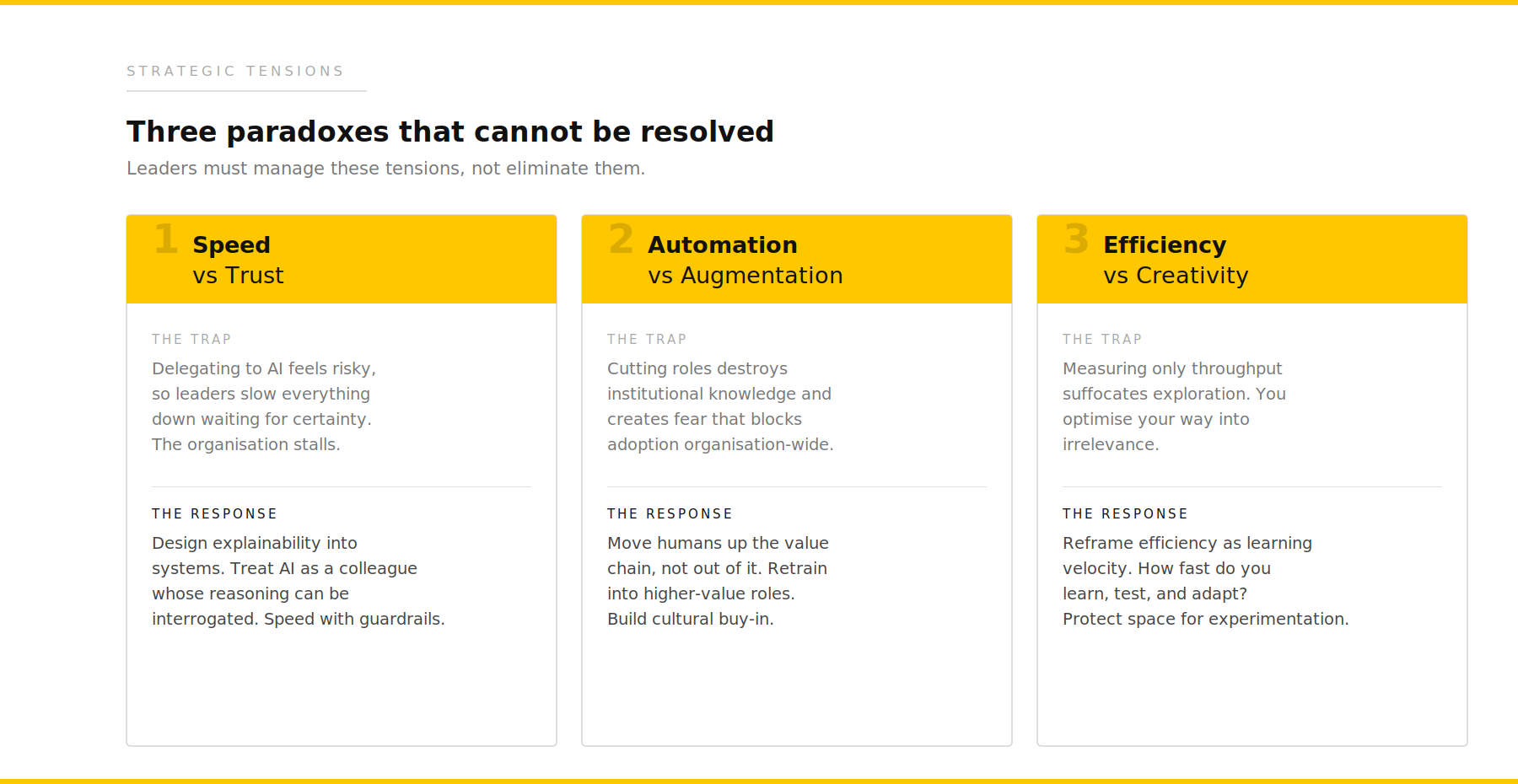

Three paradoxes that cannot be resolved

AI transformation surfaces strategic tensions that leaders must manage rather than resolve. Pretending these tensions do not exist is how organisations get blindsided.

Speed versus trust. Delegating decisions to AI feels risky, so leaders slow everything down waiting for certainty. The response is not to eliminate risk, but to design explainability into systems. Speed with guardrails, not speed without accountability.

Automation versus augmentation. Cutting roles looks efficient in the short term. It destroys institutional knowledge and creates fear that blocks adoption. The response is to move humans up the value chain, not out of it.

Efficiency versus creativity. Measuring only throughput suffocates exploration. You optimise your way into irrelevance. The response is to reframe efficiency as learning velocity.

Each of these paradoxes creates real tension in executive decision-making. The skill is holding both sides simultaneously and designing systems that can operate with the contradiction rather than pretending it away.

What separates the best leaders

What I have observed, consistently, across twenty years of advisory work and across sectors as different as government, healthcare, education, utilities and financial services, is that the leaders who navigate this well share one quality that has nothing to do with their technical knowledge.

They recognise their own patterns.

In moments of pressure, most leaders fall back on what they know. Proven approaches, familiar frameworks, past experience. It feels efficient. It feels safe. But in a rapidly changing environment, it quietly limits what is possible, because the past is no longer a reliable guide to the future.

The leaders who succeed are different. They notice when they are defaulting to what is comfortable rather than what is required. They catch themselves adding a governance layer when the real need is permission to experiment.

This is metacognition: the ability to think about your own thinking. It is the most undervalued leadership capability of our time, and AI makes it both more important and more accessible.

Leaders need to think with AI, not just about AI.

Thinking about AI is strategic planning: where to invest, what to adopt, how to govern. Thinking with AI is something fundamentally different. It is using AI as a partner in your own reasoning. Exploring scenarios you would not have considered alone. Having your assumptions challenged by a system that has no political incentive to agree with you.

The leaders I see getting the most from AI are not the ones with the best technology strategies. They are the ones who have changed how they think, and who are building organisations designed to do the same.

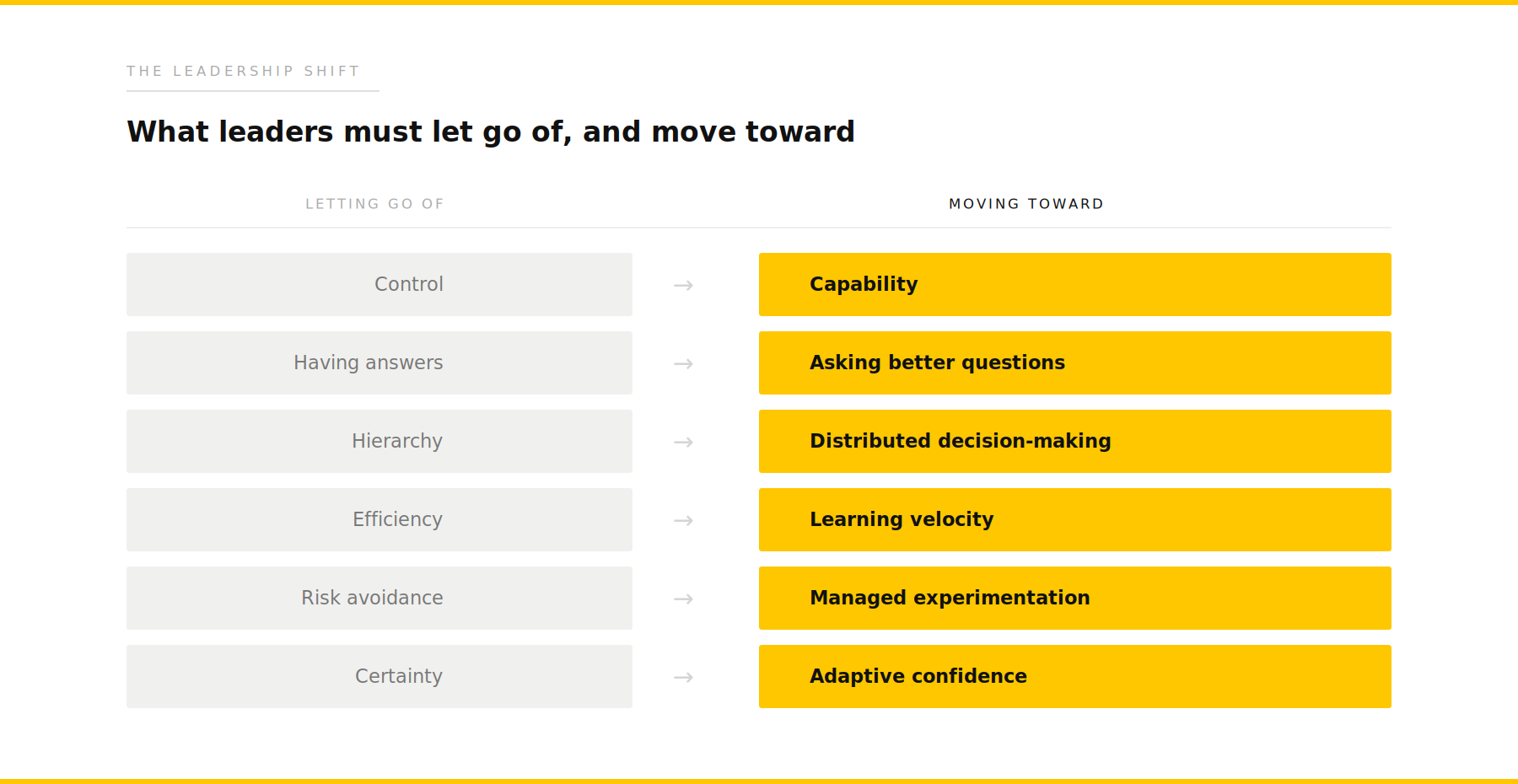

The role of the leader is changing

This is not about removing structure. It is about creating the conditions for better thinking, faster learning and more effective action.

A different kind of challenge

The organisations that succeed in the intelligence era will not be the ones that adopt AI fastest. They will be the ones that rethink how they operate. That starts with leaders who are willing to examine their own assumptions, resist the instinct to add control when the world feels uncertain, and build organisations that are structurally capable of learning at the speed of change.

The technology is not the hard part. The hard part is you.

If that provocation feels uncomfortable, it is probably worth a conversation.